1-800-AI - How to Use an LLM from your dumb phone

Background

The year was 2007. I drove a 1987 Ford F-150, carried a Qwest wireless flip phone, and somehow I got everywhere I needed to go and did all the things I needed to do without Google Maps, ChatGPT, or any of the other apps we rely on so heavily today.

A few years ago, I took an unintended sabbatical from my smartphone and switched to a so-called dumb phone.

I wish I could say I possessed some sort of unique insight into where the conversation around digital minimalism was going. However, the true story is that, like many realizations, it was completely accidental.

One day I clumsily dropped my smartphone in water, but needed access to a working phone immediately. The only device I had on hand was an old flip phone, which I intended to use for the rest of the day until I could get over to the store for a replacement.

I was surprised by the almost immediate decrease in stress I felt without the smartphone. I’ve always been susceptible to scrolling, but I had never stopped to think about how carrying a small computer on me all of the time had changed the way that I related to the world.

If I was lost, I didn’t think about how to get where I was going; I asked Google. If I wanted to know what an object was, why something happened, or the name of a celebrity I had forgotten, I could look it up instantly. While the smartphone is an amazing tool, it was training me, day after day, toward a shorter attention span and an expectation of immediate gratification.

Over the next few months I spent a lot more time looking up, and I realized how much time the rest of the world spends looking down. I started reading for fun again for the first time in several years, and I no longer felt hyperstimulated and stressed by the news. I felt more relaxed about taking a wrong turn or not knowing the exact location of something. Overall, I just felt less anxious.

The Problem

One of the biggest adjustments to life without a smartphone was the loss of the support systems that, just 15 years earlier, had existed for gathering information when you were out of the house and didn't have a computer in your pocket.

What if I was already out and about and needed an address or phone number for some place? If I wanted to go to the movies, was I going to drive home just to look up showtimes?

This made using a “dumb phone” as my primary device unrealistic, and it pushed the work my smartphone had been doing onto the people around me. Who wants to be that person?

I began to reflect on how much these systems had changed in such a short period of time. There used to be a whole network of support for people away from home through 1-800 numbers and various phone systems. Some of these still exist, but the one I really wanted was long gone: Moviefone, the 1-800 number for finding out movie showtimes near you.

So, I began to dream up a solution that would take the best parts of new technology and make it accessible through some older tech.

The Solution

Introducing: 1-800-ASKAI...

Okay, I wasn’t actually able to get a cool 1-800 phone number set up, but I did build a working proof of concept. If you are interested, you can build your own version very easily.

Goal:

Set up a phone number I could call to ask Google Gemini questions, such as when a movie is showing or what the cross streets are for a business I want to visit.

I set out vibecoding this with my good friend ClaudeAI and pretty quickly came up with the rough structure of the project. I would need the following core components:

- Twilio - Handles the actual phone calls, converts speech to text, and text back to speech

- ngrok - Tunnels your local server to the internet so Twilio can reach it (gives you a public URL)

- Gemini API - The AI brain that answers questions, with web search capability

The Code (two Python scripts):

- simple_ivr_test.py - Flask server with call routing and conversation history storage

- gemini_helper.py - Handles Gemini API communication and manages conversation context

Call flow: Phone Call → Twilio → ngrok → Flask App (stores history) → Gemini Helper → Gemini API (gets answers)

One of my favorite things about getting information from the Gemini API is that much of the "code" is really just instructions written in plain language, since the model on the other end is an LLM.

My initial instructions yielded very long-winded answers, and the model often did not know when to ask appropriate follow-up questions. It also confidently delivered wrong information and didn't always search the internet, relying instead on what it had learned during training. Essentially, all of the shortcomings you expect from an LLM.

So I updated the system instructions to include things like:

"You are a dedicated, voice-only assistant for users on a basic, non-internet-enabled (dumb) phone. "

"Your core task is to be brief, precise, and conversational to minimize listening time and cognitive load. "

"The user is relying on voice prompts only."

"Always adhere to the following rules:"

"1. General Tone: Be friendly, brief, and immediately address the user's query. Do not offer extraneous information."

"2. Confirmation/Repetition: After providing a factual answer, do NOT ask if they want it repeated - the IVR will handle follow-up questions."

"Specific Service Rules:"
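Because the instructions are plain text, wiring them in is mostly a matter of putting them in the right field of the request. Here's a minimal sketch, not the actual contents of gemini_helper.py: it assumes the Gemini REST `generateContent` request format, where the prompt goes in `system_instruction` and earlier turns from the same call go in `contents` with the roles `user` and `model`. The helper name `build_request_body` and the shortened prompt are hypothetical.

```python
# Shortened version of the system instructions above (illustrative only).
SYSTEM_INSTRUCTION = (
    "You are a dedicated, voice-only assistant for users on a basic, "
    "non-internet-enabled (dumb) phone. "
    "Your core task is to be brief, precise, and conversational to minimize "
    "listening time and cognitive load. "
    "The user is relying on voice prompts only."
)


def build_request_body(history, question):
    """Build a generateContent request body (hypothetical helper).

    history is a list of (role, text) tuples from earlier in the same call,
    where role is "user" or "model", the turn roles the Gemini API uses.
    """
    contents = [
        {"role": role, "parts": [{"text": text}]} for role, text in history
    ]
    # The new question is always the final user turn.
    contents.append({"role": "user", "parts": [{"text": question}]})
    return {
        # The system instructions travel separately from the conversation.
        "system_instruction": {"parts": [{"text": SYSTEM_INSTRUCTION}]},
        "contents": contents,
    }
```

For example, `build_request_body([("user", "What movies are playing near me tonight?"), ("model", "Which theater would you like?")], "The one downtown.")` produces a three-turn conversation, which is how a follow-up question keeps its context.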

The IVR had its own issues: it sometimes misheard what I said, and it occasionally dropped the call. These are all the shortcomings you expect from an IVR, and there wasn't much I could do about them with this approach to the project.

Still, despite capturing the worst elements of both IVRs and LLMs, I was able to create something workable that solved the problems I had.

Why Gemini?

There are many LLM APIs to choose from here, but the main reason I went with the Gemini API instead of Claude or ChatGPT was its ability to search the internet and use other Google services to gather information quickly. My goal, after all, is to get simple information like a business's cross streets, hours of operation, or movie showtimes, all things Google does exceedingly well through its search engine.
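Search is switched on per request. Here's a minimal standard-library sketch, assuming the Gemini REST endpoint `v1beta/models/{model}:generateContent`, a `google_search` entry in `tools` for grounding, the `x-goog-api-key` header for authentication, and `gemini-2.5-flash` as the model name. The function names are hypothetical, and for brevity it sends a single question without the system instructions or call history.

```python
import json
import urllib.request

# Assumed model name; use whichever Gemini model your key has access to.
MODEL = "gemini-2.5-flash"
ENDPOINT = (
    "https://generativelanguage.googleapis.com/v1beta/models/"
    f"{MODEL}:generateContent"
)


def ask_with_search(question, api_key):
    """Send one question to Gemini with Google Search grounding enabled."""
    body = {
        "contents": [{"role": "user", "parts": [{"text": question}]}],
        # Lets the model run a Google search instead of relying only on
        # what it learned during training.
        "tools": [{"google_search": {}}],
    }
    request = urllib.request.Request(
        ENDPOINT,
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json", "x-goog-api-key": api_key},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=30) as response:
        return extract_text(json.load(response))


def extract_text(response):
    """Join the text parts of the first candidate into one spoken answer."""
    candidates = response.get("candidates") or []
    if not candidates:
        return "Sorry, I couldn't find an answer to that."
    parts = candidates[0].get("content", {}).get("parts", [])
    return "".join(part.get("text", "") for part in parts).strip()
```

Calling it looks like `ask_with_search("What are the hours for the downtown library?", os.environ["GEMINI_API_KEY"])`, assuming the key is stored in a `GEMINI_API_KEY` environment variable. `extract_text` joins every text part in case an answer comes back split across several parts, and it returns a speakable fallback rather than raising when there is no candidate.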

Testing the IVR

With all four components in place, the steps for a call are quite simple, and after some updates it's very good at getting me the information I want quickly.

1.) Call phone number

2.) The IVR answers "Hello! Welcome to the voice information service. What would you like to know?"

3.) Ask a question (and hope the IVR hears it correctly)

4.) Await your answer(s)!

5.) Repeat steps 3 and 4 until you have all the information you need.
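On the Twilio side, each step of that loop is a small XML document (TwiML) returned by the Flask app: a `<Gather input="speech">` speaks a prompt and listens, then Twilio POSTs the transcription to the `action` URL as the `SpeechResult` parameter. This is a minimal sketch using only the standard library; the `/answer` route name and the fallback goodbye message are hypothetical.

```python
import xml.etree.ElementTree as ET

GREETING = ("Hello! Welcome to the voice information service. "
            "What would you like to know?")


def gather_twiml(prompt, action="/answer"):
    """Return TwiML that speaks a prompt, then listens for a spoken reply.

    Twilio transcribes the caller's speech and POSTs it to `action` as
    SpeechResult. The /answer route name is a hypothetical placeholder.
    """
    response = ET.Element("Response")
    gather = ET.SubElement(
        response, "Gather",
        input="speech", action=action, method="POST", speechTimeout="auto",
    )
    # <Say> nested inside <Gather> is spoken while Twilio listens.
    ET.SubElement(gather, "Say").text = prompt
    # Reached only if the caller says nothing: end the call politely.
    ET.SubElement(response, "Say").text = "Sorry, I didn't catch that. Goodbye!"
    return ET.tostring(response, encoding="unicode")
```

Step 2 would return `gather_twiml(GREETING)`. For steps 4 and 5, passing the model's answer as the prompt speaks the answer and then listens for the next question, so the repeat loop comes for free. In Flask, the route would return this string with a `text/xml` content type.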

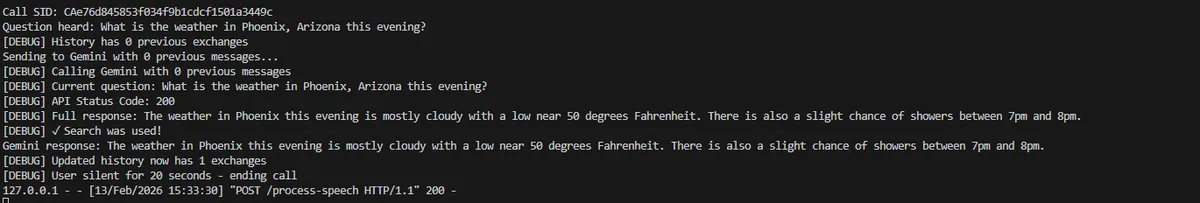

After a few tests I was able to easily get showtimes from the theater I wanted and find out the weather for the day. Each new call resets the memory, but within a single call it remembers the context of my earlier questions and factors that into its answers.
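One way to get that per-call memory is to key the conversation history on Twilio's `CallSid`, which is different for every call, so a new call naturally starts fresh. This is a minimal in-memory sketch; the `CallHistory` class, the `max_turns` cap, and the `end_call` cleanup (which you might trigger from a call-status webhook) are assumptions, not necessarily how simple_ivr_test.py stores history.

```python
import threading
from collections import defaultdict


class CallHistory:
    """In-memory conversation history, one list of turns per phone call.

    Keyed by Twilio's CallSid, so every new call starts with an empty
    history. max_turns is an assumed cap that keeps each prompt small.
    """

    def __init__(self, max_turns=10):
        self.max_turns = max_turns
        self._turns = defaultdict(list)
        self._lock = threading.Lock()  # Flask may handle requests on threads

    def add(self, call_sid, role, text):
        """Record one turn ("user" or "model") for this call."""
        with self._lock:
            turns = self._turns[call_sid]
            turns.append((role, text))
            # Keep only the most recent max_turns turns for this call.
            del turns[:-self.max_turns]

    def get(self, call_sid):
        """Return a copy of this call's turns (empty for an unknown call)."""
        with self._lock:
            return list(self._turns.get(call_sid, []))

    def end_call(self, call_sid):
        """Forget a finished call so memory doesn't grow forever."""
        with self._lock:
            self._turns.pop(call_sid, None)
```

A plain dictionary is fine for a proof of concept, but every conversation disappears whenever the development server restarts.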

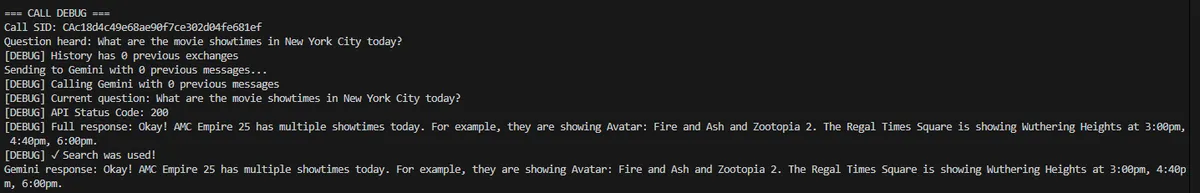

The input and output on the script in the terminal look like this for a question about movie showtimes:

Here is an example of how it responds to a basic question about the weather:

Next Steps

Deploying this to the world at large would require more than a simple test account with Twilio and a development server. However, my pie-in-the-sky idea is a novelty product: AI calling cards.

The idea is that you could buy a preloaded calling card, and the cost of your Gemini API queries would be deducted from the card's balance as you call into the system.
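The bookkeeping behind that idea could be as simple as a prepaid balance that each answered question draws down. This is a hypothetical sketch: the `CallingCard` class and the five-cent price per query are made-up placeholders, not real Gemini or Twilio pricing.

```python
from decimal import Decimal


class CallingCard:
    """Prepaid balance for a hypothetical AI calling card."""

    def __init__(self, balance, price_per_query=Decimal("0.05")):
        # Placeholder price; a real card would price in actual per-call costs.
        self.balance = Decimal(balance)
        self.price_per_query = price_per_query

    def charge_query(self):
        """Deduct one query; return False (charging nothing) if funds are short."""
        if self.balance < self.price_per_query:
            return False
        self.balance -= self.price_per_query
        return True
```

Using `Decimal` instead of floats keeps the money math exact, so a card loaded with `"5.00"` runs down to exactly zero rather than leaving a rounding error behind.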

Overall, this project was more about the fun of building it than about solving a real day-to-day problem for me. Most days I am still tied to my smartphone because of some non-negotiable applications that I haven't figured out how to connect to an IVR yet.

However, if this might solve a problem you have, I would encourage you to think about building your own.

If you want more posts about blending outdated technology with modern technology, feel free to sign up for the mailing list on the homepage.